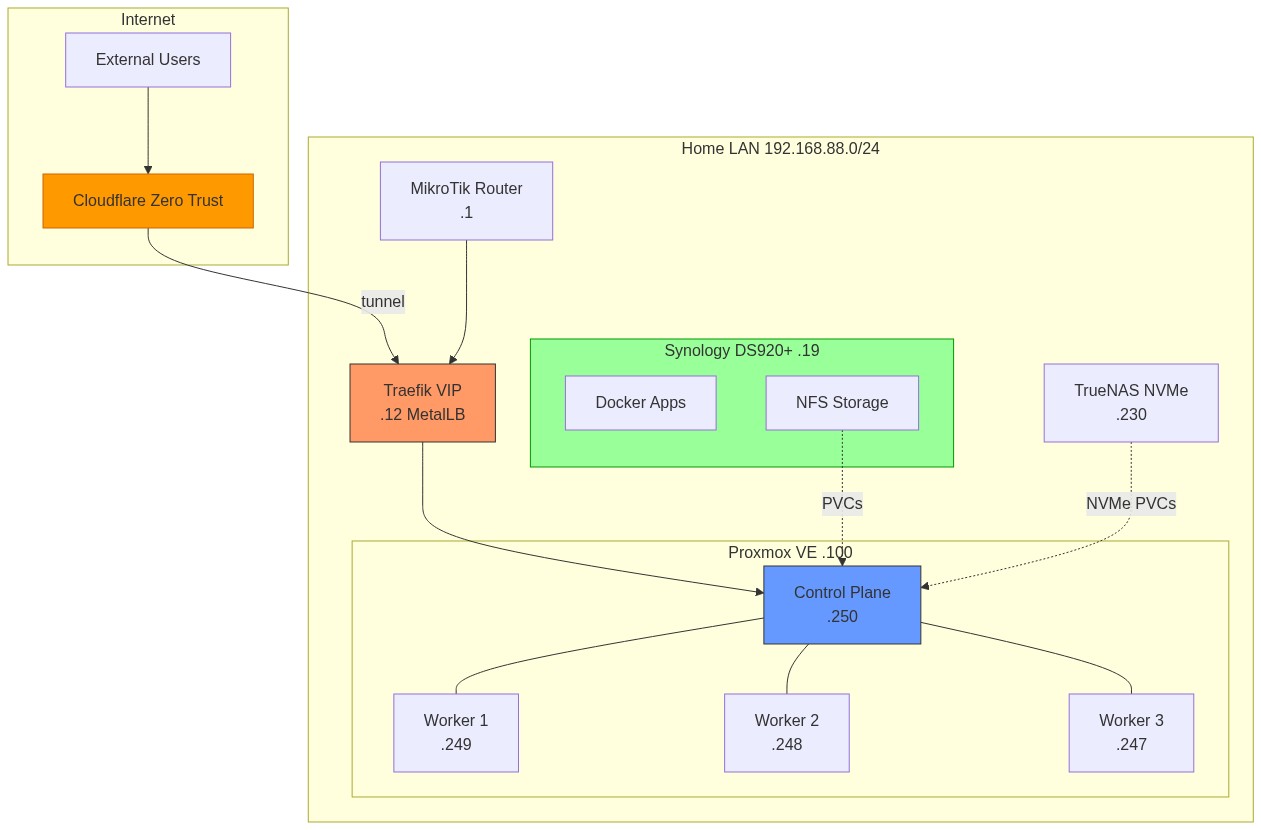

¶ Architecture & Network Topology

¶ High-Level Infrastructure Diagram

graph TB

subgraph Internet

USER[LAN Clients]

EXTUSER[External Users]

CF[Cloudflare Zero Trust]

end

subgraph Home Network

RT[MikroTik Router .1]

subgraph Proxmox VE .100

CP[Control Plane .250]

W1[Worker 1 .249]

W2[Worker 2 .248]

W3[Worker 3 .247]

end

subgraph Synology DS920+ .19

NFS_SVC[NFS Server]

DOCKER[Docker Apps]

MON_EXP[Exporters]

VW_CF[Vaultwarden cloudflared]

end

subgraph K8s Cluster

LB[Traefik VIP .12]

end

TNAS[TrueNAS .230]

UTIL[Utility Server .245]

CF_RT[cloudflared<br/>MikroTik container]

end

USER -->|DNS via Technitium| RT

USER -->|HTTP/HTTPS| LB

EXTUSER -->|vault.vyanh.uk| CF

EXTUSER -->|wiki status tracker ntfy| CF

CF -->|tunnel| CF_RT

CF -->|tunnel| VW_CF

CF_RT -->|routes internally| LB

RT --> LB

LB --> CP

CP --- W1

CP --- W2

CP --- W3

NFS_SVC -.->|NFS v4| CP

MON_EXP -.->|Metrics scrape| CP

style LB fill:#f96,stroke:#333

style CP fill:#69f,stroke:#333

style NFS_SVC fill:#6c6,stroke:#333

style CF fill:#f90,stroke:#333

style CF_RT fill:#f90,stroke:#333

style VW_CF fill:#f90,stroke:#333

¶ Cloudflare Tunnel — Public Hostnames

One managed Cloudflare tunnel runs as a container on the MikroTik router (app-cloudflared) and a separate dedicated tunnel runs inside the Vaultwarden compose stack on the NAS.

Tunnel 1 — MikroTik container (app-cloudflared RouterOS container, container IP 192.168.202.2):

| Public Domain | Routes To | Notes |
|---|---|---|
| wiki.vyanh.uk | WikiJS via Traefik homelab.vyanh.uk | Via K8s Traefik LB |
| status.vyanh.uk | Uptime Kuma :3001 on NAS | Via Traefik nas-ingress |
| tracker.vyanh.uk | LifeOps :80 in-cluster | Personal asset tracker |
| ntfy.vyanh.uk | ntfy :2586 on NAS | Push notifications, public-facing |

Tunnel 2 — Vaultwarden (cloudflared container in vaultwarden/docker-compose.yml on NAS):

| Public Domain | Routes To | Notes |
|---|---|---|
| vault.vyanh.uk | Vaultwarden :8843 on NAS | Separate tunnel, independent of K8s |

Running cloudflared on the MikroTik router keeps the tunnel itself up even during a full K8s cluster outage, and frees the cluster resources that cloudflared would otherwise consume.
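
The two tables above amount to a lookup from public hostname to tunnel and backend. A minimal Python sketch of that mapping follows; the data is transcribed from the tables, while the `Route` type, the tunnel labels `"mikrotik"` and `"vaultwarden"`, and the `hostnames_for_tunnel` helper are hypothetical names used only for illustration, not part of any cloudflared configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    tunnel: str   # which cloudflared instance carries the hostname (label is illustrative)
    backend: str  # the "Routes To" column from the tables above


# Transcribed from the two tunnel tables; the structure itself is an assumption.
PUBLIC_ROUTES = {
    "wiki.vyanh.uk": Route("mikrotik", "WikiJS via Traefik homelab.vyanh.uk"),
    "status.vyanh.uk": Route("mikrotik", "Uptime Kuma :3001 on NAS"),
    "tracker.vyanh.uk": Route("mikrotik", "LifeOps :80 in-cluster"),
    "ntfy.vyanh.uk": Route("mikrotik", "ntfy :2586 on NAS"),
    "vault.vyanh.uk": Route("vaultwarden", "Vaultwarden :8843 on NAS"),
}


def hostnames_for_tunnel(tunnel: str) -> list[str]:
    """Return the public hostnames served by one tunnel, sorted by name."""
    return sorted(h for h, r in PUBLIC_ROUTES.items() if r.tunnel == tunnel)
```

Calling `hostnames_for_tunnel("vaultwarden")` returns only `vault.vyanh.uk`, which reflects the point of the split: the password vault does not share a tunnel with anything else.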

¶ Network Topology Table

| IP Address | Hostname | Role | Key Services |
|---|---|---|---|
| 192.168.88.1 | MikroTik Router | Gateway / Firewall / DHCP | NAT, DNS forwarding to Technitium |
| 192.168.88.12 | Traefik VIP | K8s Ingress (MetalLB L2) | All *.homelab.vyanh.uk traffic |
| 192.168.88.19 | Synology NAS | DS920+ Storage + Docker | NFS, Immich, Vaultwarden, etc. |
| 192.168.88.100 | Proxmox VE | Hypervisor | Hosts all K8s VMs |
| 192.168.88.230 | TrueNAS | Secondary NAS | Backup storage |
| 192.168.88.245 | Utility Server | Monitoring | SNMP exporter, PVE exporter |
| 192.168.88.247 | k8s-node-3 | K8s Worker | Workloads |
| 192.168.88.248 | k8s-node-2 | K8s Worker | Workloads |
| 192.168.88.249 | k8s-node-1 | K8s Worker | Workloads |
| 192.168.88.250 | k8s-cp | K8s Control Plane | API server, etcd, scheduler |

¶ DNS Resolution Flow

graph TD

CLIENT[LAN Client] -->|DNS Query| RT[MikroTik Router]

RT -->|Forward| TC[Technitium Primary/Secondary]

TC -->|Blocked?| BLOCK[Return NXDOMAIN]

TC -->|Local DNS?| LOCAL["Return 192.168.88.12<br/>(Traefik VIP)"]

TC -->|External query| ROOT[Root DNS Servers via DoH]

ROOT --> TLD[TLD Nameservers]

TLD --> AUTH[Authoritative NS]

AUTH -->|Answer| TC

TC -->|Answer| CLIENT

K8S_POD[K8s Pod] -->|DNS Query| CDNS[CoreDNS]

CDNS -->|cluster.local| K8S_SVC[K8s Service]

CDNS -->|Custom hosts| CUSTOM["10.99.138.53<br/>homelab.vyanh.uk"]

CDNS -->|External| TC

EDNS[ExternalDNS] -->|Watch Ingress| ING[Ingress Resources]

EDNS -->|rfc2136 TSIG| TC

style BLOCK fill:#f66,stroke:#333

style LOCAL fill:#6f6,stroke:#333

Key Points:

- Technitium runs as primary (.11) + secondary (.13) in K8s (sync wave 6) plus a third instance on MikroTik (.53) for high availability

- Technitium forwards upstream queries to Cloudflare and Google over DoH (DNS-over-HTTPS), so resolution beyond the LAN is encrypted

- CoreDNS has custom host entries pointing `*.homelab.vyanh.uk` to an in-cluster IP (10.99.138.53) so pods can resolve internal services

- ExternalDNS watches K8s Ingress resources and auto-creates DNS records in Technitium via rfc2136 + TSIG (upsert-only policy)
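
The Technitium branch of the diagram (blocked, local, or forwarded) can be sketched as a small decision function. This is a simplification under stated assumptions: the blocklist entry is hypothetical, and matching the whole `*.homelab.vyanh.uk` wildcard stands in for the per-Ingress records that ExternalDNS actually creates.

```python
from fnmatch import fnmatch

TRAEFIK_VIP = "192.168.88.12"      # Traefik VIP from the topology table
LOCAL_ZONE = "*.homelab.vyanh.uk"  # treated as a wildcard here for simplicity
BLOCKLIST = {"ads.example.com"}    # hypothetical blocked domain


def resolve(name: str) -> str:
    """Mirror the three outcomes shown for a LAN client query."""
    name = name.rstrip(".").lower()  # normalise a trailing dot and case
    if name in BLOCKLIST:
        return "NXDOMAIN"
    if fnmatch(name, LOCAL_ZONE):
        return TRAEFIK_VIP
    return "FORWARD"  # handed to the upstream DoH resolvers
```

Pods take a different path: CoreDNS answers the same names with the in-cluster IP instead of the VIP, which this sketch does not model.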

¶ TLS & Ingress Flow

sequenceDiagram

participant Client

participant MetalLB as MetalLB (L2)

participant Traefik as Traefik Ingress

participant CM as cert-manager

participant LE as Let's Encrypt

participant CF as Cloudflare DNS

participant App as Backend Service

Client->>MetalLB: HTTPS *.homelab.vyanh.uk

MetalLB->>Traefik: Forward to VIP 192.168.88.12

Note over Traefik,CM: First request or cert renewal

Traefik->>CM: TLS cert needed

CM->>LE: DNS-01 challenge

LE->>CF: Verify TXT record

CF-->>LE: Verified

LE-->>CM: Certificate issued

CM-->>Traefik: TLS secret stored

Traefik->>Traefik: TLS termination (TLS 1.3)

Traefik->>App: HTTP to ClusterIP service

App-->>Traefik: Response

Traefik-->>Client: HTTPS response

Configuration Details:

- MetalLB IP pool: `192.168.88.10 - 192.168.88.30` (Layer 2 advertisement)

- Traefik: TLS 1.3 minimum, security headers middleware, rate limiting

- cert-manager issuers:
  - `letsencrypt-dns01` (ClusterIssuer): primary, via Cloudflare API, supports wildcards
  - `letsencrypt-prod` (ClusterIssuer): backup, HTTP-01 via Traefik
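
One check worth running against an L2 pool is whether any statically addressed host sits inside the pool range. The sketch below uses only the pool bounds and the non-Kubernetes addresses from the topology table; the `in_pool` helper and the `STATIC_HOSTS` name are illustrative. Whether an overlap matters depends on how the pool is actually configured, which this page does not show.

```python
from ipaddress import IPv4Address

POOL_START = IPv4Address("192.168.88.10")
POOL_END = IPv4Address("192.168.88.30")


def in_pool(ip: str) -> bool:
    """True if the address lies within the MetalLB pool range (inclusive)."""
    return POOL_START <= IPv4Address(ip) <= POOL_END


# Static hosts copied from the topology table (K8s nodes and the VIP omitted).
STATIC_HOSTS = {
    "MikroTik Router": "192.168.88.1",
    "Synology NAS": "192.168.88.19",
    "Proxmox VE": "192.168.88.100",
    "TrueNAS": "192.168.88.230",
    "Utility Server": "192.168.88.245",
}

# Hosts whose static address falls inside the pool range.
overlaps = {name: ip for name, ip in STATIC_HOSTS.items() if in_pool(ip)}
```

With the addresses listed on this page, `overlaps` contains the Synology NAS at 192.168.88.19, so that address presumably needs to stay unassigned by MetalLB.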

¶ GitOps Deployment Flow

graph LR

DEV[Developer] -->|git push| GH[GitHub]

subgraph GitHub Actions Pipeline

DETECT[Detect Changes] --> LINT[Lint + Test]

LINT --> GATE[CI Gate]

GATE --> BUILD[Kaniko Build]

BUILD --> SIGN[Cosign Sign]

SIGN --> SCAN[Trivy Scan]

SCAN --> UPDATE[Update Manifests]

end

GH --> DETECT

UPDATE -->|Push tag update| K8SREPO[k8s-cluster-config]

K8SREPO -->|Detect drift| ARGO[ArgoCD]

ARGO -->|Sync| K8S[Kubernetes Cluster]

K8S -->|Pull image| HARBOR[Harbor Registry]

BUILD -->|Push image| HARBOR

style GATE fill:#ff9,stroke:#333

style ARGO fill:#f96,stroke:#333

style HARBOR fill:#69f,stroke:#333

Flow Details:

- Developer pushes to `LifeOps` repo on GitHub

- GitHub Actions runs 6-stage CI/CD pipeline on self-hosted ARC runners

- Kaniko builds container images in-cluster (no Docker daemon needed)

- Images pushed to Harbor (`harbor.homelab.vyanh.uk/applications/`)

- Cosign signs images by digest with private key

- Trivy scans for HIGH/CRITICAL vulnerabilities

- Pipeline updates image tags in k8s-cluster-config repo

- ArgoCD detects the change and syncs to cluster

- Pods pull new images from Harbor
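
The "update image tags" step is the hand-off point between CI and ArgoCD. A minimal sketch of what such a step might do is shown below, assuming manifests reference images as `harbor.homelab.vyanh.uk/applications/<name>:<tag>`; the `bump_image_tag` function, the image name `lifeops`, and the tag values are hypothetical, and the real pipeline may use a different tool entirely.

```python
import re

REGISTRY_PREFIX = "harbor.homelab.vyanh.uk/applications/"


def bump_image_tag(manifest: str, image_name: str, new_tag: str) -> str:
    """Replace the tag of REGISTRY_PREFIX + image_name wherever it appears."""
    pattern = re.compile(
        "(" + re.escape(REGISTRY_PREFIX + image_name) + r"):[\w.\-]+"
    )
    # A callable replacement avoids backreference ambiguity when the tag starts with a digit.
    return pattern.sub(lambda m: f"{m.group(1)}:{new_tag}", manifest)


# Hypothetical manifest fragment and tags, for illustration only.
before = "image: harbor.homelab.vyanh.uk/applications/lifeops:abc123\n"
after = bump_image_tag(before, "lifeops", "def456")
```

Committing the rewritten manifest to the k8s-cluster-config repo is what ArgoCD then detects as a change to sync.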

¶ Secret Management Flow

graph TD

TF[Terraform Cloud] -->|Create policies & roles| VAULT[HashiCorp Vault]

TF -->|Seed placeholders| VAULT

ADMIN[Admin] -->|Set real values| VAULT

VAULT -->|K8s Auth| VSO[Vault Secrets Operator]

VSO -->|Watch VaultStaticSecret CRs| K8S_API[K8s API]

VSO -->|Create/Update| SECRET[K8s Secrets]

SECRET -->|Mount as env/volume| POD[Application Pods]

subgraph Per Namespace

SA[ServiceAccount vso-auth]

VA[VaultAuth CR]

VSS[VaultStaticSecret CR]

SA --> VA

VA --> VSS

end

VSO --> SA

Key Properties:

- Per-namespace isolation: each namespace has its own Vault policy scoped to `kv/data/<namespace>/*`

- Automatic refresh: VSO syncs secrets every 60 seconds (configurable per VaultStaticSecret)

- Placeholder convention: all seeds use `"PLACEHOLDER_UPDATE_IN_VAULT"` for easy grep

- OIDC dual-path pattern: client secrets stored at both `authentik/<app>-oidc` and `<app-namespace>/oidc`
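
The path conventions above are simple enough to express as string rules. The sketch below illustrates the per-namespace scope, the OIDC dual-path pattern, and the placeholder check; all function names are hypothetical, and the example app and namespace names in the usage note are not taken from this setup.

```python
PLACEHOLDER = "PLACEHOLDER_UPDATE_IN_VAULT"


def policy_allows(namespace: str, path: str) -> bool:
    """True if path falls under the namespace's kv/data/<namespace>/* scope."""
    return path.startswith(f"kv/data/{namespace}/")


def oidc_paths(app: str, app_namespace: str) -> tuple[str, str]:
    """Return both locations where an app's OIDC client secret is stored."""
    return (f"authentik/{app}-oidc", f"{app_namespace}/oidc")


def unset_keys(secret: dict[str, str]) -> list[str]:
    """List the keys still holding the seeded placeholder value."""
    return sorted(k for k, v in secret.items() if v == PLACEHOLDER)
```

For example, `oidc_paths("grafana", "monitoring")` yields `authentik/grafana-oidc` and `monitoring/oidc`, and `unset_keys` gives a quick way to spot secrets an admin has not yet filled in.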